Shira Baror, Biyu J He, Spontaneous perception: a framework for task-free, self-paced perception, Neuroscience of Consciousness, Volume 2021, Issue 2, 2021, niab016, https://doi.org/10.1093/nc/niab016


Abstract

Flipping through social media feeds, viewing exhibitions in a museum, or walking through the botanical gardens, people consistently choose to engage with and disengage from visual content. Yet, in most laboratory settings, the visual stimuli, their presentation duration, and the task at hand are all controlled by the researcher. Such settings largely overlook the spontaneous nature of human visual experience, in which perception takes place independently from specific task constraints and its time course is determined by the observer as a self-governing agent. Currently, much remains unknown about how spontaneous perceptual experiences unfold in the brain. Are all perceptual categories extracted during spontaneous perception? Does spontaneous perception inherently involve volition? Is spontaneous perception segmented into discrete episodes? How do different neural networks interact over time during spontaneous perception? These questions are imperative to understand our conscious visual experience in daily life. In this article we propose a framework for spontaneous perception. We first define spontaneous perception as a task-free and self-paced experience. We propose that spontaneous perception is guided by four organizing principles that grant it temporal and spatial structures. These principles include coarse-to-fine processing, continuity and segmentation, agency and volition, and associative processing. We provide key suggestions illustrating how these principles may interact with one another in guiding the multifaceted experience of spontaneous perception. We point to testable predictions derived from this framework, including (but not limited to) the roles of the default-mode network and slow cortical potentials in underlying spontaneous perception. We conclude by suggesting several outstanding questions for future research, extending the relevance of this framework to consciousness and spontaneous brain activity. 
In conclusion, the spontaneous perception framework proposed herein integrates components of human perception and cognition that have traditionally been studied in isolation, and opens the door to understanding how visual perception unfolds in its most natural context.

In stark contrast to typical studies in vision, most perceptual experiences are spontaneous in that they are free from task constraints and are paced by the observer as an autonomous agent.

Recent studies utilizing naturalistic stimuli in task-free viewing paradigms, and separately, studies exploring the temporally dependent nature of cognitive dynamics, begin to elucidate how spontaneous perception may unfold in the brain.

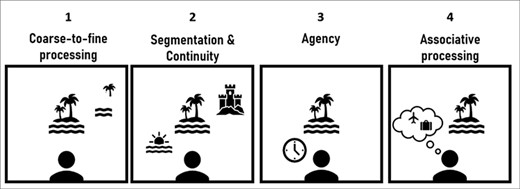

Building upon this evidence, we propose that spontaneous perception may be guided by several organizing principles. These include coarse-to-fine processing, mechanisms of segmentation and continuity, agency-related mechanisms, and associative processing.

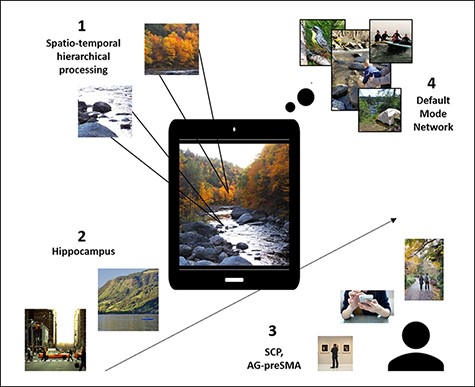

We propose that slow cortical potentials and the default-mode network are pivotal to spontaneous perception and further discuss the importance of unpacking spontaneous perception to understand our daily conscious visual experience.

What is spontaneous perception?

Flipping through images on Instagram, or walking through a museum, people continuously choose to engage and disengage from visual content. But imagine that when you go through your social media feed, images are presented to you for a fixed duration or that you are required to categorize them along one specific dimension (e.g. is that cute cat bigger or smaller than a shoebox?). The visual experience in these rather puzzling scenarios is quite different from how we spontaneously interact with our visual environment, in which we are free to determine the duration of each experience and free to engage with it without specific instructions. These aspects that highlight the spontaneous nature of perception have been largely overlooked in most experimental endeavors and are at the focus of this article.

By ‘spontaneous perception’ we refer to task-free and self-paced perception. Theoretically, in the absence of externally imposed temporal structure or task requirements, spontaneous perception may demonstrate considerable variability or even randomness. Furthermore, due to its experimentally uncontrolled nature, the study of spontaneous perception is inherently challenging. The more degrees of freedom participants have when taking part in perceptual experiments, the more challenging the analyses become. Nevertheless, there is a recent surge in studying perception under free-viewing conditions, manipulating naturalistic stimuli (Sonkusare et al. 2019) in task-free settings (e.g. movie viewing). Still, the study of perception that is both task-free and self-paced has been scarce. Given this gap, much is still unknown about how spontaneous perception unfolds in the brain. Here we first propose that spontaneous perception is guided by several organizing principles that grant it temporal and spatial structures (Fig. 1). We build upon what is already known about task-free and/or self-paced perceptual processes, their temporal dynamics, and the ways contents evolve over time within them. Based on this evidence, we further draw predictions regarding the neural mechanisms that may sustain spontaneous perception (Fig. 2) and we conclude by highlighting several outstanding questions for future research, such as the relation between conscious and unconscious processing in spontaneous perception (Box 1).

Figure 1. Proposed organizing principles of spontaneous perception.

Figure 2. An outline of ongoing spontaneous perception and the hypothesized mechanisms involved.

The relation between conscious and unconscious processing in spontaneous perception is a fascinating avenue for future research. Unconscious processes are predominantly studied using paradigms that manipulate stimulus visibility (or audibility, etc.), such as threshold-level perception, masking, or interocular suppression. As spontaneous perception involves temporally extended and dynamic engagement with the sensory input, we posit that unconscious processes may influence spontaneous perception in several ways.

First, unconscious processing may be expressed prior to visual input through spontaneous neural activity. A wealth of findings using trial-based paradigms shows that pre-stimulus spontaneous activity influences subsequent conscious perception and perceptual recognition (e.g., Baria et al. 2017; Podvalny et al. 2019; Li et al. 2020; Glim et al. 2020; Samaha et al. 2020) and subsequent memory of events (Sadeh et al. 2019). Using naturalistic stimuli, Cohen et al. (2020) show that pre-stimulus activity contributes to memory of freely viewed clips by enhancing perception-related processing and reducing perception-unrelated processing. Therefore, preceding spontaneous neural activity may influence the content one engages with during spontaneous perception. Additionally, given that pre-stimulus activity can reflect one’s attention or arousal state (Jensen et al. 2012; Podvalny et al. 2019), it may also influence the self-paced characteristic of spontaneous perception, to the extent that endogenous neural states influence engagement duration.

Second, we proposed that initial conscious recognition during spontaneous perception is first achieved at the global level rather than the local level (e.g. Mona Lisa’s face is consciously perceived before the curve of her smile). How unconscious low-level processing influences gist-level conscious spontaneous perception is not fully understood. Furthermore, we have suggested that in spontaneous perception, coarse-level recognition is followed by dynamically focusing on different parts of the visual input. Which parts of the input are consciously perceived at any given moment and which remain unconsciously represented are important open questions. Interestingly, a recent study shows that perceptual echoes (∼10-Hz oscillations triggered by visual input), which are discussed here as a possible continuity mechanism, are triggered by both conscious and unconscious inputs during binocular rivalry (Luo et al. 2021). It is therefore possible that unconscious processing during spontaneous perception also involves mechanisms of continuity, which may interact with conscious processing.

Lastly, we suggested that spontaneous perception is characterized by extensive associative processing. The content that reaches conscious awareness may switch from one association to another (e.g. Who does the Mona Lisa remind you of? When was the first time you saw this painting? etc.). At any given moment some associations remain unconscious. What determines which generated associations are consciously experienced first and how unconscious associations influence conscious processing are important open questions as well. In addition, a recent study shows that pre-stimulus activity is associated with mind wandering during a perceptual task (Zanesco et al. 2020). This sets the stage to further explore whether pre-stimulus unconscious processing biases which associations will consequently become conscious during spontaneous perception.

Principle 1: spontaneous perception is constructed by coarse-to-fine, hierarchical levels of processing

The sensory environment encompasses visual characteristics that can be processed at multiple levels, from low-level features such as orientation and color, to high-level features such as categorical and schematic information. Do all processing levels contribute equally to spontaneous perception?

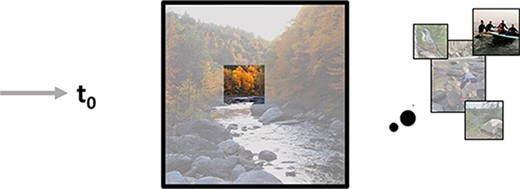

Based on recent evidence from studies employing free-viewing conditions, here we propose that while all processing levels contribute to spontaneous perception, conscious spontaneous perception is primarily achieved at the coarse level of processing. We further propose that, given its temporally extended nature (from seconds to minutes), initial coarse-level spontaneous perception is followed by dynamic interactions between representations of various categories and between local and global processing levels.

Evidence for the involvement of low-level features in spontaneous perception comes from free-viewing paradigms showing that early information accumulation processes, measured by the distribution of saccades, are influenced by low-level features. For example, when freely viewing an image, participants shift their gaze behavior toward exploration when image size increases, and perform more extensive sampling by increasing fixation rate at the expense of fixation duration (Gameiro et al. 2017). Similarly, introducing disparity information during free-viewing leads to an increased fixation rate as well as to shorter and faster saccades (Jansen et al. 2009).

While low-level processing is apparent under free-viewing conditions, here we propose that conscious experience during spontaneous perception is governed by the higher, schematic, and abstract information levels. This idea is in line with several theories of perception that propose coarse-to-fine processing as a principle. The Reverse Hierarchy Theory about perceptual learning and conscious perception (Hochstein and Ahissar 2002; Ahissar and Hochstein 2004) suggests that although incoming sensory information can comprise detailed local representation, one would consciously perceive the global representation first, after which conscious perception of fine-grained details will take place if necessary. The Contextual Guidance Model of visual search (Torralba et al. 2006) suggests that during perception of natural images, both contextual guidance and image saliency drive global and local processes, respectively, but that early contextual guidance efficiently constrains local processing. In the context of spontaneous perception, this model is supported by findings showing that fixation duration during free-viewing of images is better predicted by the image’s distribution of semantic information than its distribution of low-level saliency (Henderson and Hayes 2017). Contextual meaning accounts for fixation distribution better than feature salience during free-viewing (Walker et al. 2017; Peacock et al. 2019), and this predominance of coarse-level processing is further boosted when perceptual agency is maximized (by allowing head and body movement in a virtual reality environment, Haskins et al. 2020). The focus on context-level information measured by saccades was recently found to correspond with activity in higher-order, scene-selective regions (Henderson et al. 2020), demonstrating coarse-level prioritization in combined neuroimaging and eye tracking.

The idea of contextual guidance resonates with the object recognition account proposed by Bar et al. (2006). This account involves a two-stage processing model, according to which during object recognition first a coarse representation of the input is triggered by low spatial frequencies to the orbitofrontal cortex, and next, this process activates a narrow set of predictions regarding the object’s identity, guiding subsequent recognition in the fusiform gyrus. This model of processing low followed by high spatial frequencies (i.e. coarse-to-fine processing) has been supported in scene (Musel et al. 2014; Hansen et al. 2018) as well as in face perception (Petras et al. 2019; Dobs et al. 2019). Along similar lines, the coarse-vividness hypothesis (Campana and Tallon-Baudry 2013) proposes that subjective experience is first sustained by coarse information processing, which can then become more vivid by a later refinement stage of processing the details, suggested to grant conscious experience qualities such as visual intensity or visual specificity (Fazekas et al. 2020). Supporting this hypothesis, a recent study showed that when asked about their spontaneous perceptual experience, participants indicate that they first perceive the global rather than local feature of presented images (Campana et al. 2016).

Going beyond initial recognition, when one is free to determine the duration of their perceptual experience, engagement with visual information extends over longer timescales, ranging from seconds to minutes. Duration may be correlated with the complexity of the perceptual stimulus (Hegdé and Kersten 2010; Marin and Leder 2016) and may also depend on observer-specific factors (e.g. expertise; Brieber et al. 2014). Given this extended temporal scale, we propose that after initial coarse-level processing, spontaneous perception involves dynamic interactions between different levels of processing and between different perceptual categories. This is demonstrated by findings showing that within a scene or a schema, categorical information (e.g. faces and language) is represented in category-selective regions during free-viewing of movies, and that this selective neural activation correlates with the objective intensity of each perceptual category present on screen (Bartels and Zeki 2004, 2005). Social categories are also dynamically represented during task-free perception, primarily in dorsomedial prefrontal cortex (Wagner et al. 2016). Furthermore, during prolonged free-viewing of static images, eye movements shift between local and global scanning patterns (Tatler and Vincent 2008). In a similar manner, recent work on episodic recall suggests that free recall of episodes begins with coarse construction of the represented scene and is followed by a subsequent elaboration stage, with detailed information rapidly represented in category-selective brain regions (Gilmore et al. 2021). Weaving these pieces of evidence together, we suggest that while spontaneous perception is constructed at all processing levels, conscious spontaneous experience takes a global-to-local path, such that one is first conscious of gist information, after which local details are consciously perceived.

The coarse-to-fine principle applies not only to the hierarchical construction of the visual experience, but also to the temporality of the experience. The functional cortical hierarchy is mirrored in a similar temporal hierarchy: visual cortex is sensitive to rapid changes, representing short events, while higher-level areas that process schematic information accumulate information over longer timescales (Bartels and Zeki 2004b; Hasson et al. 2008). Building upon these conceptualizations of chrono-architecture in the brain, findings support a coarse-to-fine temporal precedence in task-free settings. For example, using a data-driven approach in movie free-viewing settings, Baldassano et al. (2017) show that activity in higher-order areas such as the medial prefrontal cortex and the angular gyrus (AG) corresponds with relatively long events, and, importantly, that these processing scales match human-annotated events better than activity in sensory areas. In addition, Baldassano et al. (2018) found that activity in default-mode network (DMN) regions (e.g. the medial prefrontal cortex and the superior frontal gyrus) abstracts away from modality-specific features during task-free perception (i.e. while hearing auditory narratives or watching movies), and is suggested to represent schematic information that is broader than the local features involved. Together, these findings showcase the predominance of coarse-level processing in both the spatial and temporal domains.
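The notion of a temporal hierarchy can be made concrete with a toy calculation. The sketch below is illustrative only and is not the analysis used in the studies cited above: it assumes that a region's "timescale" can be summarized as the first lag at which the autocorrelation of its activity falls below 1/e, and it uses simulated first-order autoregressive signals as hypothetical stand-ins for a fast sensory region and a slow higher-order region. The function names and parameter values are assumptions made for this example.

```python
import random
from math import exp


def autocorrelation(x, lag):
    """Sample autocorrelation of the sequence x at a given positive lag."""
    n = len(x)
    mean = sum(x) / n
    var = sum((v - mean) ** 2 for v in x)
    cov = sum((x[i] - mean) * (x[i + lag] - mean) for i in range(n - lag))
    return cov / var


def intrinsic_timescale(x, max_lag=100):
    """First lag at which the autocorrelation drops below 1/e (capped at max_lag)."""
    threshold = exp(-1)
    for lag in range(1, max_lag + 1):
        if autocorrelation(x, lag) < threshold:
            return lag
    return max_lag


def simulate_ar1(phi, n, seed):
    """Toy signal: x[t] = phi * x[t-1] + Gaussian noise (hypothetical 'region')."""
    rng = random.Random(seed)
    x = [0.0]
    for _ in range(n - 1):
        x.append(phi * x[-1] + rng.gauss(0.0, 1.0))
    return x


# Hypothetical regions: weak temporal memory (sensory-like) vs strong (higher-order-like).
fast_region = simulate_ar1(phi=0.5, n=5000, seed=1)
slow_region = simulate_ar1(phi=0.95, n=5000, seed=1)
print(intrinsic_timescale(fast_region), intrinsic_timescale(slow_region))
```

Under these assumptions, the signal with stronger temporal memory yields the longer estimated timescale, which is the qualitative pattern the temporal hierarchy account describes for higher-order regions.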

It should be further noted that in all free-viewing studies reviewed above, the duration of engagement with the perceptual stimulus was predetermined, either by the temporal structure of a movie or by the researchers’ trial design. Therefore, with the existing free-viewing literature (of both static images and continuous movies), whether and how coarse-grained and fine-grained levels of processing guide self-paced behavior remains an important question for future research. It is possible that similar to the coarse-to-fine processing trajectory found from the onset of visual stimuli onward, studies focusing on task-free perception that is also self-paced will reveal a tractable trajectory pertaining to the processing levels that are engaged when transitioning from one image to another.

In summary, spontaneous perception is suggested to involve all levels of visual processing, from the accumulation of content at the local feature level, to global processes in which the perceptual information undergoes abstraction and is schematically represented in higher-order brain regions. We suggest that this hierarchical build-up is reversed within conscious experience of spontaneous perception—namely, that one is first conscious of the schematic, coarse-level information, which is followed by engagement in the local and category levels of processing. The dynamic interactions between these different processing levels are hypothesized to influence the duration and termination of the perceptual experience in self-paced contexts.

Principle 2: spontaneous perception is sustained by temporal mechanisms of continuity and segmentation

Spontaneous perception is an ongoing experience, as the natural stream of visual input is not a priori segmented in time; it is also an autonomous experience, as agents set their own pace in the perceptual environment. Combining these two elements, here we integrate perception-focused studies, which show that contextual changes and transitions in continuous or sequential inputs initiate perceptual segmentation (and subsequent memory), with agency-focused studies, which attest to mechanisms of the volitional pacing of experience. We propose that spontaneous perception inherently involves continuity and segmentation that are driven by the input’s properties, the observer’s volition, and the interaction between the two.

‘Event segmentation’ relates to the ways in which continuous or sequential experiences transform into distinct episodic representations, separated by ‘event boundaries’ (Zacks et al. 2007; Zacks 2020). Theories of event segmentation propose that significant contextual changes and/or decreased predictability of the perceptual input trigger segmentation (Schapiro et al. 2013; Radvansky and Zacks 2017; Clewett et al. 2019).
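As a rough illustration of how a contextual change can mark an event boundary, the sketch below flags a boundary whenever the pattern at one time point is poorly correlated with the pattern at the preceding time point. This is a deliberately simplified stand-in, not the method of any study cited here; the function names, the toy patterns, and the threshold of 0.5 are assumptions made for the example.

```python
from math import sqrt


def pearson(a, b):
    """Pearson correlation between two equal-length vectors (0.0 if either is constant)."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / sqrt(var_a * var_b)


def find_event_boundaries(patterns, threshold=0.5):
    """Indices t at which pattern t is weakly correlated with pattern t-1."""
    return [
        t
        for t in range(1, len(patterns))
        if pearson(patterns[t - 1], patterns[t]) < threshold
    ]


# Toy sequence: three similar patterns (one "event"), then three patterns of another kind.
patterns = [
    [1.0, 0.0, 0.0, 1.0],
    [1.0, 0.1, 0.0, 0.9],
    [0.9, 0.0, 0.1, 1.0],
    [0.0, 1.0, 1.0, 0.0],
    [0.1, 1.0, 0.9, 0.0],
    [0.0, 0.9, 1.0, 0.1],
]
print(find_event_boundaries(patterns))  # the only sharp change is at index 3
```

A fixed threshold on adjacent-pattern similarity is the simplest possible choice; it ignores the graded boundary salience and multiple timescales discussed in the text.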

A prominent brain region that is often proposed to underlie event segmentation is the hippocampus, which is well known for its role in pattern separation (Yassa and Stark 2011). Hippocampal activity increases during moments of significant or surprising changes during movie viewing, and this sensitivity to event boundaries is modulated by the boundaries’ saliency (Ben-Yakov and Henson 2018). However, segmentation is not limited to the hippocampus but is found in multiple neocortical regions as well (e.g. AG, parahippocampal cortex, and posterior medial cortex), whose activity corresponds with human annotation of segmented events (Baldassano et al. 2017). These high-level boundaries are associated with a subsequent peak in hippocampal activity, which subserves post-event memory encoding (Sols et al. 2017; Silva et al. 2019). Segmentation under free-viewing conditions, therefore, is supported by multiple brain regions at varying timescales. Integrating the principle of segmentation with the temporal hierarchy account (Hasson et al. 2008) and the Reverse Hierarchy Theory of perceptual processing (Ahissar and Hochstein 2004), we suggest that segmentation during spontaneous perception is first governed by coarse visual information at coarse temporal scales, after which perceptual events at finer temporal scales can trigger more local segmentation. Supporting this suggestion are findings showing that activity during memory recall of movie viewing correlates with activity patterns during online viewing in higher-order brain regions (largely overlapping the DMN) at coarse timescales (Chen et al. 2017), implying that memory formation relies primarily on segmentation between extended temporal windows of perception.

Mechanisms of segmentation in spontaneous perception are complemented by mechanisms of continuity. One neural substrate that has been suggested to index perceptual continuity over long timescales is slow cortical potentials (SCPs), which refer to an ongoing low-frequency (<5 Hz), aperiodic component of brain field potentials (He and Raichle 2009). SCPs are found to correlate with the spontaneous neural activity recorded by functional magnetic resonance imaging within intrinsic large-scale networks (He et al. 2008), both of which contain temporal correlations in the order of seconds to minutes (He et al. 2010; He 2011). It is shown that SCP’s temporal structure contains nested, cross-frequency interactions with higher-frequency bands (He et al. 2010; He 2014). This is crucial for sustaining spontaneous perception that integrates and segments events over a range of timescales. Lastly, as we elaborate in the next section, spontaneous perception integrates sensory/perceptual mechanisms with associative and agency-related processes; thus, the flow of information between large-scale brain networks is a necessary prerequisite for spontaneous perception to emerge, and SCPs, given their ability to synchronize across long distances (He and Raichle 2009), seem ideally suited to temporally sustain this form of perception. Notably, a previous proposal that neural processes indexed by SCPs underlie conscious awareness (He and Raichle 2009) is supported by studies on conscious perception (Douglas et al. 2015; Baria et al. 2017) and states of consciousness (Bourdillon et al. 2020). This implies that the dynamics of SCPs are part of the neural signatures of conscious perception (for an extended discussion on how unconscious processes may influence spontaneous perception, see Box 1).
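To make the "low-frequency (<5 Hz)" description concrete, the following sketch isolates the slow component of a sampled signal with a first-order low-pass filter. It is a crude illustration under stated assumptions, not a recommended SCP analysis pipeline: the 5-Hz cutoff is taken from the definition above, while the filter type, the 1000-Hz sampling rate, and the test sinusoids are assumptions chosen for the example.

```python
import math


def low_pass(signal, fs_hz, cutoff_hz=5.0):
    """First-order (single-pole) low-pass filter applied sample by sample."""
    dt = 1.0 / fs_hz
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)  # time constant matching the cutoff
    alpha = dt / (rc + dt)                  # smoothing factor in (0, 1)
    out, y = [], 0.0
    for x in signal:
        y += alpha * (x - y)                # move output toward the current sample
        out.append(y)
    return out


def rms(x):
    """Root-mean-square amplitude of a sequence."""
    return math.sqrt(sum(v * v for v in x) / len(x))


def sine(freq_hz, fs_hz, seconds):
    """Unit-amplitude sinusoid sampled at fs_hz."""
    n = int(fs_hz * seconds)
    return [math.sin(2.0 * math.pi * freq_hz * i / fs_hz) for i in range(n)]


fs = 1000.0                    # assumed sampling rate in Hz
slow = sine(1.0, fs, 4.0)      # 1-Hz component, inside the slow band
fast = sine(40.0, fs, 4.0)     # 40-Hz component, well above the cutoff

# Fraction of amplitude that survives filtering for each component.
print(rms(low_pass(slow, fs)) / rms(slow))
print(rms(low_pass(fast, fs)) / rms(fast))
```

The slow sinusoid passes almost unchanged while the fast one is strongly attenuated. A single-pole filter has a shallow roll-off and introduces phase lag, so sharper zero-phase filters would normally be preferred in practice.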

Furthermore, in the context of visual processing, SCPs have been suggested to contribute to serial dependence in perception (Huk et al. 2018), a well-established phenomenon demonstrating correlations between consecutive perceptual events in trial-based experiments (Fischer and Whitney 2014), thereby promoting perceptual stability and continuity. Various features of the perceptual input are found to elicit serial dependence, including orientation (Fischer and Whitney 2014), spatial location (Feigin et al. 2021), and face identity (Liberman et al. 2014). These features are disentangled in laboratory experiments but are intertwined in natural environments, and often change conjointly. In fact, shared contexts seem to boost serial dependence (Fischer et al. 2020). In light of their role in synchronizing across regions and networks, possibly forming an ‘internal neural context’ (but note that perceptual contents can also be decoded from SCPs), SCPs may promote perceptual continuity when sustaining dependence in multiple features that synchronously change over time. Serial dependence is rarely studied in the context of spontaneous perception, but some evidence shows that during free-viewing conditions, successive saccades and fixations demonstrate dependencies (Tatler and Vincent 2008). It is therefore possible that serially dependent eye-movement patterns in spontaneous perception may also have contributions from SCPs. Combining the serially dependent nature of perception with the mechanisms of segmentation discussed above, it would be of value to explore the conditions in which self-paced perceptual behavior demonstrates continuity vs. segmentation.
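Serial dependence can be illustrated with a small calculation. The sketch below assumes a simplified linear measure: the slope obtained when regressing the current response error on the difference between the previous and the current stimulus. A positive slope means that responses are pulled toward the preceding stimulus. The linear form, the function name, and the toy data are assumptions made for this example; circular features such as orientation would additionally require angular wrapping, which is omitted here.

```python
def serial_dependence_slope(stimuli, responses):
    """Least-squares slope of response error on (previous stimulus - current stimulus)."""
    # Predictor: how far the previous stimulus was from the current one.
    xs = [stimuli[t - 1] - stimuli[t] for t in range(1, len(stimuli))]
    # Outcome: signed error of the current response.
    ys = [responses[t] - stimuli[t] for t in range(1, len(stimuli))]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


# Toy observer whose responses are pulled 20% of the way toward the previous stimulus.
stimuli = [10.0, 30.0, 20.0, 50.0, 40.0, 15.0]
pull = 0.2
responses = [stimuli[0]] + [
    stimuli[t] + pull * (stimuli[t - 1] - stimuli[t]) for t in range(1, len(stimuli))
]
print(serial_dependence_slope(stimuli, responses))  # recovers the simulated pull
```

In a self-paced setting, the same measure could in principle be computed over successive freely chosen fixations or images rather than over experimenter-defined trials, although that extension is speculative.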

While we propose that SCPs may sustain continuity over relatively long timescales, other mechanisms have been suggested to sustain perceptual continuity at faster timescales. One such mechanism is ‘perceptual echoes’, ∼10-Hz alpha oscillations that are triggered by visual input and last for ∼1 second, proposed to maintain perceptual information over that time frame (VanRullen and MacDonald 2012). This phenomenon is suggested to facilitate an ongoing sense of perceptual consciousness by bridging discontinuities caused by the discrete, periodic sampling of information (Busch and VanRullen 2010; VanRullen 2016). Taken together, much as segmentation takes place over a hierarchy of temporal windows (Hasson et al. 2008), it is plausible that a similar hierarchy exists in temporal continuity mechanisms, with perceptual echoes sustaining continuity between short-lived events and SCPs contributing to continuity at longer timescales.

Segmentation and continuity in typical experiments are predominantly proposed to arise from the stimulus’s properties (e.g. predictability within its context). Yet, being a self-paced experience, spontaneous perception may also inherently involve volition-based segmentation and continuity mechanisms. Supporting this suggestion, increases in SCP precede volitional action (e.g. Douglas et al. 2015) and convergent findings demonstrate a slow build-up of blood-oxygen-level-dependent (BOLD) activity preceding free and spontaneous creative behavior (Broday-Dvir and Malach 2021). Along these lines, we propose that in self-paced spontaneous perception, continuity and segmentation may be sustained by SCP build-ups and phase resets, respectively.

Taken together, we suggest that continuity and segmentation in spontaneous perception involve higher-order brain regions such as the DMN and low-frequency brain activity embodied in SCPs. Importantly, consistent with the hierarchy of timescales across cortical regions (Murray et al. 2014; Hasson et al. 2015), DMN activity has been shown to exhibit slow timescales in free-viewing paradigms. The processes of continuity and segmentation can be influenced by stimulus-driven factors as well as by volitional mechanisms. To expand on the latter, in the next section we discuss how spontaneous perception—as a self-governed experience—intrinsically involves the sense of agency.

Principle 3: agency is key in spontaneous perception

Given that spontaneous perception pertains to task-free processing of visual content in a self-governing context, we propose that the role of agency comes into play both in the ‘what’ as well as in the ‘when’ in spontaneous perception.

In typical perceptual experiments, even those that are task-free, the onset and duration of the perceptual experience are determined by the experimenter. In contrast, spontaneous perception involves a self-governing characteristic that grants it a sense of agency, that is, a sense of control over one’s actions and consequences (Haggard 2017). Multiple studies in the field of self-initiated actions point to the inferior parietal lobule (IPL) and specifically to the AG as the key brain region related to the sense of agency (Farrer and Frith 2002; Farrer et al. 2008; Khalighinejad and Haggard 2015). The AG is sensitive to delays between actions and feedback in multiple sensory modalities (Van-Kemenade et al. 2017; Uhlmann et al. 2020), and is proposed to be a ‘comparator’ that facilitates the sense of agency by monitoring action–perception relations. In parallel to its role in action-related agency, the AG is a core region in the DMN, which is robustly implicated in spontaneous cognition (Christoff et al. 2016). Considering this evidence, we propose that the AG is a key hub for sustaining agency in spontaneous perception.

Agency is closely related to the concept of volition. Volition refers to the ability to decide whether to act, how to act, and when to act (Haggard 2008). The key features of volition include being spontaneous, conscious, and goal-directed (Fried et al. 2017), features that also apply to the self-initiated, self-directed, and self-terminated aspects of spontaneous perception. Volitional actions are orchestrated by a tightly interconnected brain network including the IPL/AG, supplementary motor area (SMA) and pre-SMA, and motor and pre-motor areas (for reviews, see Haggard 2008; Desmurget and Sirigu 2012; Fried et al. 2017). Within this network, converging evidence suggests that the IPL/AG plays an especially important role in generating conscious motor intention prior to movement onset (Desmurget et al. 2009; Desmurget and Sirigu 2012; Douglas et al. 2015), the SMA/pre-SMA plays a role in releasing the motor/pre-motor cortex from inhibition by the basal ganglia more proximal to movement onset (Fried et al. 2011; Fried et al. 2017), and the IPL/AG plays an additional role in generating the sense of agency as discussed above.

How is volition involved in spontaneous perception? We propose that volition informs spontaneous perception through the motorically refined calibration of eye movements. Indeed, some studies show that volitional and reflexive eye movements are sustained by different mechanisms (Deubel 1995), and animal studies show that neurons in the pre-SMA are selectively activated when an animal switches from reflexive to volitional saccades (Hikosaka and Isoda 2008). In humans, volitional saccades demonstrate greater activity in the frontal eye field compared with reflexive saccades (Henik et al. 1994), and the opposite contrast shows differences in the AG and precuneus brain regions (Mort et al. 2003; Schraa-Tam et al. 2009).

Connecting the principle of segmentation and continuity to volition and agency in spontaneous perception, it is reasonable to assume that segmentation based on observer-dependent volition and segmentation based on stimulus-driven predictability will be associated with different eye-movement patterns and correspondingly with different pre-SMA and AG activities.

Furthermore, recent findings support the idea that eye movements, rather than being passively drawn to salient stimuli in the environment, reflect an active process of information sampling, subserving instrumental goals such as uncertainty reduction and reward maximization (Donnarumma et al. 2017; Gottlieb and Oudeyer 2018). This framework further suggests that in the absence of a specific task, active sampling is carried out in an exploratory manner, allowing information gathering that reflects a state of non-instrumental curiosity (Gottlieb and Oudeyer 2018; Van Lieshout et al. 2020). Active sampling that is motivated by such a state of curiosity, by definition, cannot be measured in controlled settings and depends on one’s agency and volition in actively determining the focus of attention and in moving the eyes accordingly (Gottlieb and Oudeyer et al. 2013). Connecting this line of research with the coarse-to-fine processing principle, we expect that after initial coarse-level perceptual processing, subsequent dynamic sampling of information is guided by one’s uninstructed, self-determined intrinsic motivation until a decision is made to conclude the perceptual experience. Naturally, such dynamics can only be explored when agency is maintained in the experimental setup. Future studies that investigate self-paced perception in task-free settings will help clarify how active sampling strategies are influenced by agency-related mechanisms.

Principle 4: spontaneous perception relies on associative processing

Lastly, we describe the principle of associative processing in spontaneous perception. We propose that in the absence of task requirements, spontaneous perception goes beyond the visual experience to involve wide-ranging associative processing that cannot be fully captured in paradigms that use controlled visual presentations. Associative processing supports spontaneous perception at several temporal scales. First, associative processing may support immediate recognition processes, forming a top-down predictive mechanism that guides recognition of incoming perceptual information in light of existing priors (O’Callaghan et al. 2017). This process is likely more pronounced when sensory input contains ambiguity, as often is the case for vision in the natural environment (Wang et al. 2013). At an intermediate timescale, associative processing between prior and current perceptual events may help sustain perceptual continuity, as discussed in the section on segmentation and continuity mechanisms. Finally, associative processing at the long timescale connects sensory information with long-term past experiences, triggering mental representations that one engages with in the post-recognition stage. These include non-visual associations that are stored in memory, such as knowledge-based, valence-based, or self-referential associations elicited by the visual stimulus. Associative processing in spontaneous perception thus contributes to one’s extended, individualized, and dynamic engagement with the percept. This perceptual stage cannot be fully understood when the duration of image presentation is controlled by the researcher. Nevertheless, some studies that manipulate free-viewing conditions with extended presentation duration begin to elucidate this process, as discussed next.

One example of the contribution of associative processing to spontaneous perception is valence-based associations. Findings show that when participants self-pace their perceptual experience, the duration of image-viewing increases with the extraction of aesthetic evaluation (Brieber et al. 2014), demonstrating that beyond sensory features, valence-based information triggered by the visual input influences self-paced behavior. Similarly, Subramanian et al. (2014) found that valence influences eye movements in free-viewing, such that gaze patterns are less dispersed and more focused toward indicative features when viewing emotional movie clips compared with neutral ones. Furthermore, when watching emotional scenes, participants better remember scene gist at the expense of peripheral details. This may suggest that extracting emotion-based associations interacts with the coarse levels of processing, such that affective associations promote coarse over fine perceptual processing. The link between visual and affective associations is further exemplified in findings showing that increased associativity of visual objects and increased affective value converge on a shared cortical substrate in the orbitofrontal cortex (Shenhav et al. 2013; Chaumon et al. 2014), an area that is sensitive to the intersection among perception, memory, and affect and has been suggested to be pivotal for prediction generation by rapidly processing the low spatial frequencies of visual input (Bar et al. 2006).

The primary neural network that has been suggested to sustain associative processing is the DMN, which has been implicated in domain-independent, associative, and predictive processing (Bar et al. 2007; Stawarczyk et al. 2019). The DMN’s activity pattern reflects prior knowledge-guided perceptual disambiguation, consistent with its situation at the intersection between perception and memory (Gonzales-Garcia et al. 2018; Flounders et al. 2019). Increased DMN activity is found when perceiving artwork that is rated as highly aesthetically pleasing (Vessel et al. 2012) and when perceiving pleasing stimuli including natural landscapes and architecture (Vessel et al. 2019). Furthermore, in exploring the dynamics of the DMN during free-viewing of artistic paintings (although not self-paced), it was recently shown that DMN activity increases over the first few seconds of viewing an aesthetically pleasing painting (Belfi et al. 2019). Taken together, it is reasonable to expect that the affordance of various forms of associations (e.g. aesthetic associations or self-referential associations) influences the temporal dynamics of the DMN during self-paced spontaneous perception.

In summary, we propose that spontaneous perception is inherently influenced by associative processing that ranges from rapid pre-recognition processes, through mid-timescale associations that link consecutive events during perception, to long-range associations eliciting information stored in long-term memory and affective systems. We predict that this associative processing is primarily supported by DMN activity (see Box 2 for a closer inspection of the multiple possible roles the DMN may take in spontaneous perception).

Spontaneous perception encompasses several key processes that are associated with the DMN. DMN activity is robustly linked with spontaneous thoughts taking place in the absence of task demands (Mason et al. 2007). It is also associated with perceptual disambiguation by utilizing priors (Gonzales-Garcia et al. 2018), event model representation (Stawarczyk et al. 2019), and cognitive abstraction (Margulies et al. 2016). The AG, which is discussed here primarily in the context of agency in spontaneous perception, is a core hub of the DMN, supporting cross-modal information integration (Andrews-Hanna et al. 2014). DMN activity has also been suggested to underlie domain-general associative processing (Bar et al. 2007), spanning from self-referential associations (Vessel et al. 2013) and episodic memories (Schacter et al. 2007) to aesthetic pleasantness (Vessel et al. 2013). Additionally, it has been shown that the DMN is sensitive to the level of vivid detail in experience during spontaneous cognition and task contexts (Sormaz et al. 2018; Smallwood et al. 2021). These various accounts of the DMN are all related to the core principles of spontaneous perception. Therefore, to better pinpoint the role of the DMN in the experience of spontaneous perception, future studies should examine how activity patterns in the DMN, as a whole as well as within its subparts, and dynamic interactions between the DMN and other neural networks are modulated by the above-mentioned aspects (i.e. abstract vs. detailed representations, continuity and segmentation, the agency of the observer, and the affordance of associations that the perceptual input prompts).

Outstanding questions

What are the temporal dynamics of spontaneous perception? Tracking the temporal dynamics relating to the onset and offset of percepts may reveal a unique temporal signature of self-paced perception.

How do we spontaneously segment perceptual experience into discrete events? It is currently unknown how mechanisms that pertain to the state of the observer (e.g. volition) interact with stimulus-driven factors (e.g. contextual changes) in guiding self-segmentation in spontaneous perception.

How do different neural networks interact during spontaneous perception? The interaction between visual processing, volitional mechanisms, and associative processes in spontaneous perception is yet unknown.

How is spontaneous perception influenced by spontaneous neural activity? The influences of intrinsic, ongoing fluctuations in spontaneous neural activity on spontaneous perception remain poorly understood (see also Box 1).

Implications for future research

The main challenge in studying spontaneous perception is the immense inter- and intra-individual variability that is automatically introduced when spontaneous behavior is allowed. It is thus not surprising that empirical studies in which perception is both task-free and self-paced have been scarce. Nevertheless, overcoming this challenge may bear several important implications for future research.

First, the spontaneous perception framework proposed herein derives its hypotheses from substantial knowledge gained from prior studies using typical laboratory setting paradigms, which aim to isolate key components of perception and cognition. Future research that allows for self-paced and task-free perception will help to test the generalization of this valuable knowledge in more ecologically valid scenarios, where perceptual and cognitive systems likely interact more intimately and seamlessly.

Second, beyond increased ecological validity, it is possible that in some respects, spontaneous perception will be found to be inherently different from perceptual processing operationalized in controlled, task-based laboratory settings. For example, by definition, studies in which trials are randomized disrupt the role contextual continuity may play in perception and, similarly, studies that control stimulus timing neutralize the role of agency in the temporal dimension. Therefore, studying spontaneous perception is expected not only to elucidate whether findings from prior research apply to naturalistic settings but also to shed light on mechanisms that controlled laboratory-based experiments cannot resolve, mechanisms that naturally work closely in tandem, and mechanisms that possibly play a larger role in perception than previously shown.

Third, during spontaneous perception, the boundary between perception and cognition may become fuzzy and a two-way bridge may emerge between the two. For example, integrating the coarse-to-fine principle and the associative processing principle, it is plausible that during spontaneous perception, initial recognition triggers associative processing or even mind wandering, which then dictates subsequent information sampling and perceptual focus. Such bi-directional interactions between externally triggered and internally generated processes, and their possible functional roles, are often overlooked but can be studied under the spontaneous perception framework.

Lastly, some perceptual phenomena may gain new meaning when viewed under the spontaneous perception prism. For example, serial dependence can be interpreted as a sub-optimal bias induced by past events, but in the context of spontaneous perception, it reflects the importance of contextual continuity. Similarly, DMN activity during resting states may seem puzzling when not subserving the task at hand, but considering the abundance of associative processing one engages with in spontaneous settings, this resting-state activity may in fact reflect a mechanism that sustains the richness of our perceptual experiences and integrates them with past events.

Concluding remarks

For decades, perception has been studied by controlled experiments in laboratory settings, largely overlooking the task-free and self-paced nature of perception. Recently, a paradigm shift has been observed, as more and more studies employ naturalistic stimuli and ‘free-viewing’ designs. Still, a theoretical scheme for how spontaneous perception unfolds has yet to be provided, partly because studies that allow self-paced behavior are scarce. Here we propose a framework for spontaneous perception. We define spontaneous perception as task-independent, self-paced perceptual processing and propose that spontaneous perception is guided primarily by four principles: spatiotemporal coarse-to-fine processing, continuity and segmentation, agency and volition, and associative processing. We hope that by putting the spontaneous perception framework to the test, this avenue of research will advance our understanding of visual perception in its most natural context.

Data Availability

The current article does not introduce new data but rather proposes a theoretical framework for studying spontaneous perception.

Funding

This work was supported by National Science Foundation grants (BCS-1926780 and BCS-1753218) and an Irma T. Hirschl Career Scientist Award (to BJH).

Conflict of interest statement

None declared.

References

Author notes

Biyu J. He, http://orcid.org/0000-0003-1549-1351